EARs deployed on WebSphere do not show as started

Hi All,

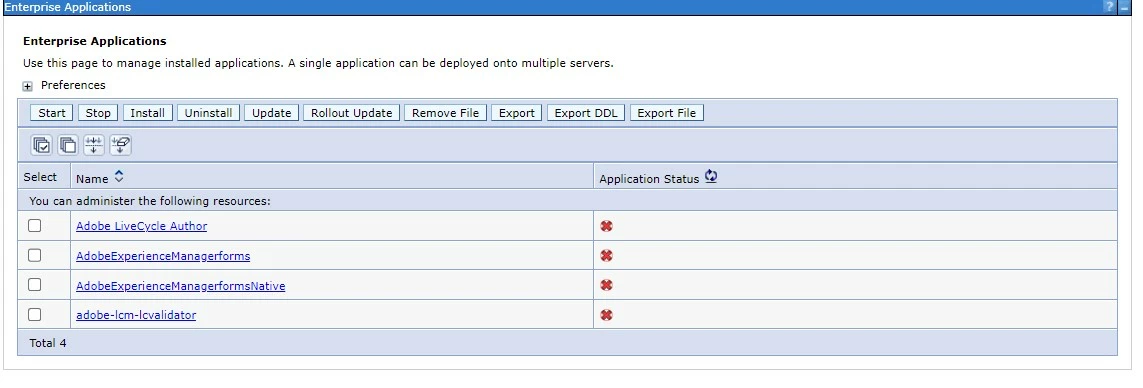

I have an AEM instance deployed on a WebSphere stack. Everything works as expected on the AEM side, but in the WAS console all 4 EARs show their status as down.

I have verified in the JVM startup logs that all 4 EARs start successfully.
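To cross-check the runtime state without relying on the console, a small wsadmin (Jython) script can ask whether a running `Application` MBean is registered for each installed application; `AdminControl.completeObjectName` returns an empty string when nothing matches. This is a minimal sketch, assuming it is run with `wsadmin.sh -lang jython -port <SOAP port of the target server> -f check_apps.py`. The file name and the helper names `app_is_running` and `report` are hypothetical, and scoping the query to the `cluster1` process from this post is an assumption. If wsadmin is attached to a server that cannot see the cluster1 MBeans, every application will report as not running.

```python
# check_apps.py - hypothetical helper, a sketch rather than an official tool.
# AdminApp and AdminControl are globals injected by wsadmin; they are passed in
# as parameters so the logic can also be exercised outside wsadmin.

def app_is_running(admin_control, app_name, process=None):
    """Return True if a running Application MBean exists for app_name."""
    template = "type=Application,name=%s" % app_name
    if process:
        # Assumed scoping to a single server process, e.g. "cluster1".
        template += ",process=%s" % process
    template += ",*"
    # completeObjectName returns "" when no registered MBean matches.
    return admin_control.completeObjectName(template) != ""


def report(admin_app, admin_control, process=None):
    """Print and return (name, running) for every installed application."""
    results = []
    # AdminApp.list() returns the installed application names, one per line.
    for name in admin_app.list().splitlines():
        name = name.strip()
        if not name:
            continue
        running = app_is_running(admin_control, name, process)
        results.append((name, running))
        print("%s: %s" % (name, "running" if running else "not running"))
    return results


# Only run the report when the wsadmin globals exist.
try:
    AdminApp
except NameError:
    pass
else:
    report(AdminApp, AdminControl, "cluster1")
```

Running it once against each server's SOAP port would show which process actually sees the EARs as started.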

Complete application stack details:

- AEM On-Premise 6.5
- AEMForms-6.5.0-0040
- WebSphere Base 9.0.5.11
- Linux RedHat_79

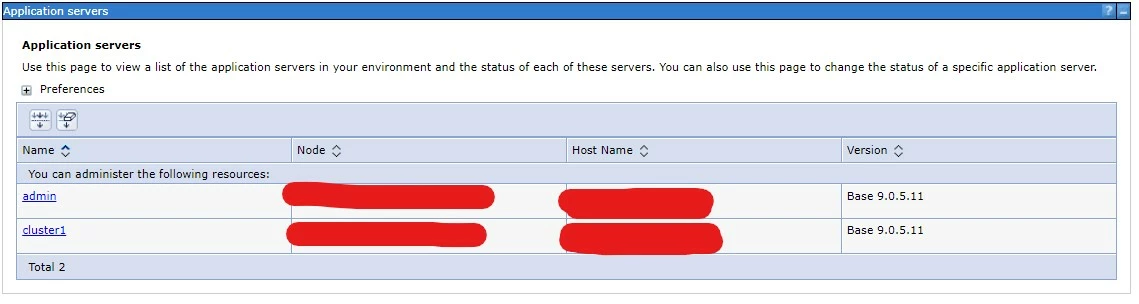

Application servers:

AEM is installed on the cluster1 JVM, while the admin JVM is the default WebSphere server.

Note: this is a standalone setup, not a clustered one.

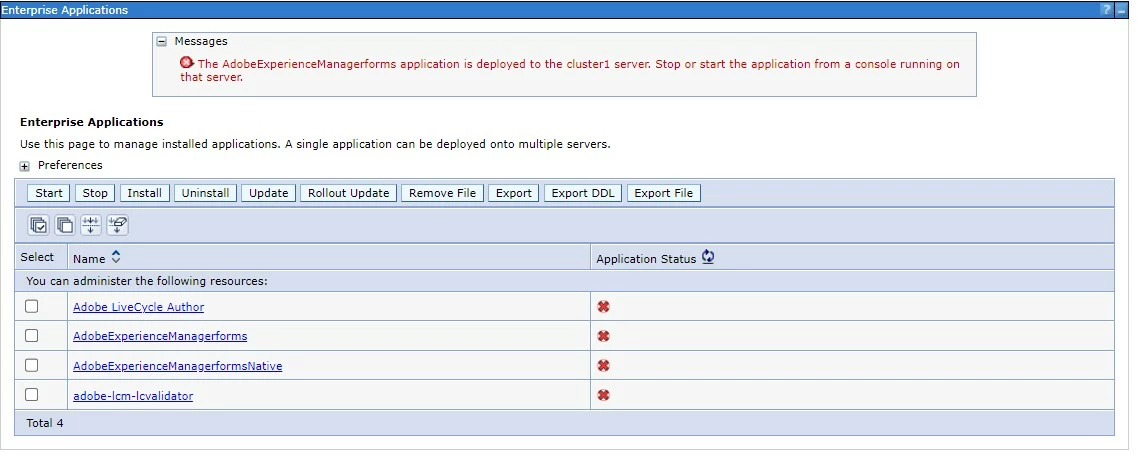

If I try to start or stop any application from the WAS console, it throws an error like the following:

I am not aware of any console running on cluster1. This makes every action from the WAS console useless, such as creating a heap dump, uninstalling an application, or monitoring logs.

Is there a configuration missing in WAS, or is there a trick involved when deploying these EARs from Configuration Manager?

Any insight is appreciated.

Thanks,

Rajat