Hi guys,

I am fairly new to the Data Sources API and have just created a NodeJS importer that queries GSC data and pushes it into a data source.

Data import works great so far, and I have imported all 2023 data.

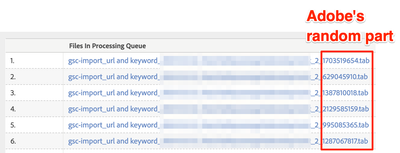

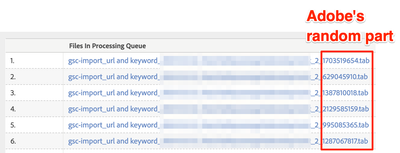

My client has a fairly big website with a large number of entries per day (25k+), which requires breaking each day's import into multiple chunks (typically 3). That leaves me at roughly 1,000 import jobs so far.
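For readers who want to picture the chunking, here is a minimal sketch. The chunk size of 10,000 rows is purely an assumption chosen so that 25k rows yield 3 chunks; it is not a documented limit of the Data Sources API, and `chunkRows` is a hypothetical helper name.

```javascript
// Assumed chunk size for illustration only (not a documented API limit).
const CHUNK_SIZE = 10000;

// Split one day's rows into consecutive chunks of at most `size` rows.
function chunkRows(rows, size = CHUNK_SIZE) {
  const chunks = [];
  for (let i = 0; i < rows.length; i += size) {
    chunks.push(rows.slice(i, i + size));
  }
  return chunks;
}

// Example: 25,000 placeholder rows for a single day.
const sampleRows = Array.from({ length: 25000 }, (_, i) => ({ id: i }));
const sampleChunks = chunkRows(sampleRows);
console.log(sampleChunks.length); // 3
```

Each chunk then becomes its own import job, which is why the job count grows about three times faster than the number of days.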

To avoid accidentally importing the same day's data twice, I have built a failsafe that first queries the names of previous jobs to see whether the day I am about to import has already been processed.

I do this using the GetJobs function:

https://api.omniture.com/admin/1.4/rest/?method=DataSources.GetJobs
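A minimal sketch of such a check, under a few loud assumptions: the job list has already been fetched and parsed into an array, each job object has a `name` field, and the importer names its jobs `gsc_<YYYY-MM-DD>_part<N>` (with whatever suffix gets appended during processing). The naming scheme and the helper names are hypothetical and only serve the illustration.

```javascript
// Hypothetical naming scheme: "gsc_<YYYY-MM-DD>_part<N>" plus an optional suffix.
function jobPrefix(day) {
  return `gsc_${day}_`;
}

// Returns true if any known job name starts with the prefix for that day.
// Assumes `jobs` is an array of objects with a string `name` field.
function alreadyImported(jobs, day) {
  const prefix = jobPrefix(day);
  return jobs.some(
    (job) => typeof job.name === "string" && job.name.startsWith(prefix)
  );
}

// Made-up job list for illustration.
const knownJobs = [
  { name: "gsc_2023-01-01_part1_ab12cd" },
  { name: "gsc_2023-01-01_part2_ef34gh" },
  { name: "gsc_2023-01-02_part1_ij56kl" },
];

console.log(alreadyImported(knownJobs, "2023-01-01")); // true
console.log(alreadyImported(knownJobs, "2023-01-03")); // false
```

Matching on a prefix sidesteps the unknown suffix, but the check is only as good as the job list it receives: if the list is incomplete, days that were imported earlier look like they are still missing.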

And here is where the issues start.

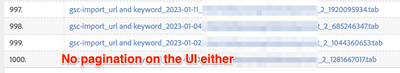

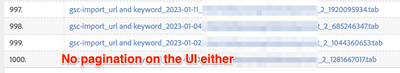

This function returns at most 1000 jobs, so older imports are no longer detected and my imports run twice. Luckily, this is currently on a non-prod report suite.

Does anyone know whether

- there is pagination in the API call that I could use, e.g. a rowStart parameter or similar?

- alternatively, there is a way to filter by job name? There is a "filters" option I can set with a "name" array, but it seems you have to know the exact name of the import job. That name includes a random part that Adobe adds to the file name during processing, which defeats the purpose of a filter and is absolute nonsense if you ask me. How can you apply a filter if you don't even know what to look for...?!

Cheers

Björn

Cheers from Switzerland!