Analytics Implementation Risk Mitigation

- April 8, 2021

Life is full of risks. So are technology projects. If you have seen the Ben Stiller movie Along Came Polly (perhaps while binge-watching movies during the pandemic), you met Reuben Feffer, a man who assesses risk for a living using a risk management software program.

In some ways, I can relate to the movie’s risk management character. As some of you know, when I worked at Omniture, one of my roles was helping customers fix broken analytics implementations. In that role, I observed many key risk points associated with digital analytics implementations. As I have mentioned in past blog posts, many digital analytics implementations fail or underperform for various reasons, often because of consequential decisions made or approaches taken at these key risk points. Whether you work for an organization managing its own digital analytics implementation or at an agency that helps clients with analytics implementations, it is important to understand where analytics programs most often go astray.

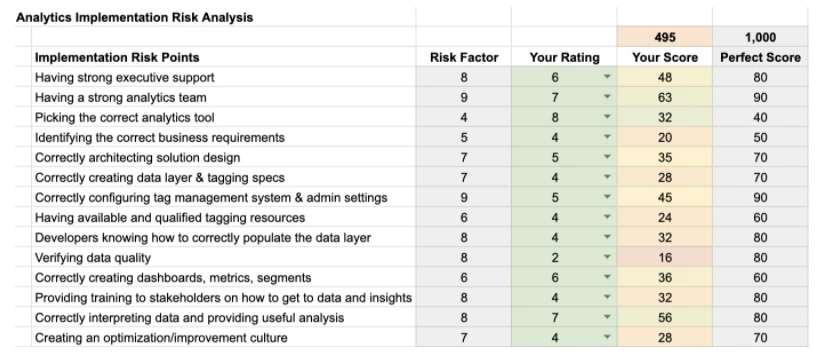

In this post, I will share some of the key risk points that I have encountered over the years. As I describe each one, I will rate it on a scale of one to ten. A low rating means that mistakes in that area tend to be less damaging than mistakes in a higher-rated area. I provide this scale to help you focus on the items that have the greatest impact on whether an analytics implementation succeeds.

While this post’s content can apply to anyone associated with a digital analytics implementation, I’m writing it primarily for those who lead or sponsor digital analytics at organizations. Marketing leaders at organizations are ultimately responsible for ensuring that digital analytics programs and implementations generate value, and, as such, they should be keenly aware of these potential risk factors. They are also the folks most likely to be in a position to rectify any deficiencies in the cited areas.

Digital Analytics Implementation Risk Factors

- Having strong executive support (Risk Factor = 8/10)

If your organization doesn’t have executives who believe in using data to improve conversion, you can spend a lot of time and effort going nowhere. Imagine hiring a full team, selecting an analytics tool, doing an implementation, and then producing analysis, only to have it ignored. I have seen this happen many times. My dad used to say, “Before you start climbing up a ladder, make sure it is up against the right wall!” So before you go too far, make sure you understand where your executive team stands on data-driven decision-making. Can they demonstrate that they have effectively used data to make decisions? Do they understand the basics of digital analytics data? Are they data-literate? Of course, you don’t want an organization that makes decisions based only on data, but if your executives use data only 10% of the time, your risk of failure (and frustration) goes up dramatically.

- Having a strong analytics team (Risk Factor = 9/10)

Even though our industry tends to focus a lot on tools and technologies (myself included!), the caliber of the people on your digital analytics team is an essential factor in success. I have seen great teams with bad analytics implementations outperform mediocre teams with stellar analytics implementations. You need people who can take data and turn it into insights and knowledge. Make sure you invest in people who have the skills they need (technical, analysis, presentation), not just in tools and technology.

- Picking the correct analytics tool (Risk Factor = 4/10)

This surprises many people, but picking the correct digital analytics tool ranks lowest on the risk factor list. Even though organizations spend a lot of time debating and choosing digital analytics tools (and switching tools unnecessarily), which tool you use will not make much difference in the end. This is because many of the available tools have similar features and because most organizations don’t take full advantage of the advanced features offered by superior tools. While some digital analytics tools and ecosystems can do much more than others, it is more important that your team knows how to use the tool it owns than to have the ultimate tool. If you think you need a more robust tool than you have now, my advice is to address all of the other risk factors on this list first and then consider changing tools once your program is more advanced.

- Identifying the correct business requirements (Risk Factor = 5/10)

As my recurring blog readers know, I am a fan of defining business requirements before you do too much implementation work. I like to understand ahead of time which business questions will be answered, so that no time is wasted creating solution designs, data layers, and tags, or performing QA, for items that no one wants. I have found that a little time spent upfront can save a lot of time down the road. Failing to correctly identify business requirements is a common point where organizations get derailed and overall implementations suffer. So take the time to define your business requirements to reduce the risk that your implementation won’t align with your stakeholders’ needs, and to keep your analytics team laser-focused on the most critical business questions.

- Correctly architecting solution designs (Risk Factor = 7/10)

Digital analytics solution designs are blueprints for how the answers to business questions will be architected in a digital analytics tool. I like to think of them as translations of business questions into data events and attributes that a digital analytics tool will use to collect data and ultimately provide answers. In fact, the best solution designs map business requirements directly to the events and attributes that will be used to address them. Unfortunately, translating business questions into effective solution designs is both an art and a science. It’s common to see different people architect entirely different solutions to the same business question. If your analytics team (or your consulting firm) doesn’t know how to correctly architect solutions to stakeholder business questions, you can end up with inaccurate or incomplete data, which can produce misinformation that is detrimental instead of helpful. Since everything in your implementation is built upon the solution design, it is a key risk point: a weak solution design significantly limits downstream analysis capabilities.
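To make the idea of mapping requirements directly to events and attributes more concrete, here is a minimal Python sketch, assuming a solution design can be held as a simple list of records. The requirement IDs, questions, event names, and attribute names are all hypothetical examples, not taken from any real implementation or tool. The two helper functions show why a direct mapping is useful: you can list requirements that nothing answers yet, and see which requirements depend on an event before anyone changes it.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One business question and the data planned to answer it (hypothetical)."""
    req_id: str
    question: str
    events: list[str] = field(default_factory=list)      # events that answer the question
    attributes: list[str] = field(default_factory=list)  # attributes used to break it down


# Hypothetical solution design: each business requirement maps directly
# to the events and attributes that will address it.
SOLUTION_DESIGN = [
    Requirement("BR-1", "How many leads does each form generate?",
                events=["lead_submitted"], attributes=["form_name"]),
    Requirement("BR-2", "Which internal search terms precede lead submissions?",
                events=["search_performed", "lead_submitted"], attributes=["search_term"]),
    Requirement("BR-3", "Which campaigns drive newsletter sign-ups?"),  # not designed yet
]


def unmapped_requirements(design: list[Requirement]) -> list[str]:
    """Return the IDs of requirements that no event answers yet."""
    return [r.req_id for r in design if not r.events]


def requirements_by_event(design: list[Requirement]) -> dict[str, list[str]]:
    """Invert the design: for each event, list the requirements that depend on it."""
    index: dict[str, list[str]] = {}
    for r in design:
        for event in r.events:
            index.setdefault(event, []).append(r.req_id)
    return index
```

Here `unmapped_requirements(SOLUTION_DESIGN)` flags `BR-3`, a question with no data behind it yet, and `requirements_by_event` shows that changing `lead_submitted` would affect two requirements.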

- Correctly creating data layer & tagging specs (Risk Factor = 7/10)

Once you have a solution design, the next step is creating the data layer and the accompanying tagging specifications. This is where the “rubber meets the road,” as they say. I have seen great business requirements and solution designs die a slow death because of poor data layers and tagging approaches. Even worse, I have seen many implementations break and lose data altogether because the underlying data layer and tagging specifications were inflexible, hard-coded, or reliant on DOM scraping.
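The following is a minimal sketch of the difference between a spec-driven data layer and DOM scraping, written in Python for illustration only (on a website, the payload would normally be a JavaScript object populated by the page’s own code). It assumes a hypothetical tagging spec that lists, for each event, the attributes developers must supply and their expected types; the event and attribute names are invented for the example. Because values are passed in explicitly from application state and checked against the spec, a change to the page’s HTML cannot silently change what is collected.

```python
# Hypothetical tagging specification: for each data layer event, the
# attributes developers must supply and the type each value should have.
TAGGING_SPEC = {
    "lead_submitted": {"form_name": str, "lead_value": float},
    "search_performed": {"search_term": str, "result_count": int},
}


def build_event(name: str, **attrs) -> dict:
    """Build a data layer payload for `name` from explicitly passed values.

    Values come from application state rather than being scraped from page
    markup, and are checked against the spec before the payload is returned.
    """
    spec = TAGGING_SPEC.get(name)
    if spec is None:
        raise KeyError(f"event {name!r} is not in the tagging spec")
    missing = set(spec) - set(attrs)
    unexpected = set(attrs) - set(spec)
    if missing or unexpected:
        raise ValueError(
            f"{name}: missing={sorted(missing)} unexpected={sorted(unexpected)}")
    for key, expected_type in spec.items():
        if not isinstance(attrs[key], expected_type):
            raise TypeError(f"{name}.{key} should be {expected_type.__name__}")
    return {"event": name, **attrs}
```

A call such as `build_event("lead_submitted", form_name="contact", lead_value=50.0)` returns the payload, while leaving out `lead_value` raises an error during development instead of quietly losing data in production.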

- Correctly configuring tag management system & admin settings (Risk Factor = 9/10)

Nowadays, it is unheard of to implement analytics without a tag management system. But ensuring that your solution design, data layer, and analytics tool variables are all aligned is arduous and time-consuming. There are many facets of correctly configuring a tag management system, and most of them have traditionally been handled by hand. This exposes your analytics implementation to a LOT of risk. It is very easy to break your implementation by adding the wrong data layer element, variable, or tag management rule. Plus, ongoing maintenance of your implementation requires you to continuously modify your tag management setup, creating even more opportunities for mistakes. Analytics tools also have administration areas where you have to configure the data points (variables) specific to your implementation. Mistakes in these settings can dramatically impact your data collection and downstream analyses.
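Part of what makes this configuration risky is that several separately maintained pieces must agree. Below is a minimal Python sketch of an automated cross-check, assuming you can export the data layer keys, the tag management data elements (with the data layer key each one reads), and the analytics variable assignments as simple dictionaries. The data element names and variable slots (`dim1` and so on) are hypothetical, and the two broken entries are planted deliberately to show what the check catches.

```python
# Hypothetical configuration snapshots. In practice these would be exported
# from the data layer spec, the tag management system, and the analytics
# tool's admin settings.
DATA_LAYER_KEYS = {"form_name", "lead_value", "search_term", "result_count"}

# Tag management data element -> data layer key it reads
DATA_ELEMENTS = {
    "DE Form Name": "form_name",
    "DE Search Term": "search_term",
    "DE Result Count": "results",  # planted typo: not a real data layer key
}

# Analytics variable -> data element that populates it
VARIABLE_MAP = {
    "dim1": "DE Form Name",
    "dim2": "DE Search Term",
    "dim3": "DE Lead Value",  # planted gap: this data element was never created
}


def find_misalignments(dl_keys: set[str], data_elements: dict[str, str],
                       variable_map: dict[str, str]) -> list[str]:
    """List every link in the data layer -> data element -> variable chain that is broken."""
    problems = []
    for element, key in sorted(data_elements.items()):
        if key not in dl_keys:
            problems.append(f"data element {element!r} reads unknown data layer key {key!r}")
    for variable, element in sorted(variable_map.items()):
        if element not in data_elements:
            problems.append(f"variable {variable!r} uses missing data element {element!r}")
    return problems
```

Running `find_misalignments(DATA_LAYER_KEYS, DATA_ELEMENTS, VARIABLE_MAP)` reports two problems: the data element that reads the nonexistent `results` key and the `dim3` variable that points at a data element that was never created. A check like this can run after every configuration change, which is exactly when manual setups tend to break.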

- Having available and qualified tagging resources (Risk Factor = 6/10)

I work with many organizations that don’t have access to the internal development resources they need to implement digital analytics. Even when they have defined their data layer and tagging specifications, development resources are so scarce that they have to wait weeks or months for developer time to tag the site or populate the data layer. This lack of resources makes their analytics implementation less agile, which means they cannot be as responsive to internal analytics stakeholders as they would like. While this risk is hard to mitigate, I have found that being proactive about the preceding steps and keeping a steady pipeline of tagging requests ready at all times allows you to capitalize on the moments when development resources do become available.

- Developers knowing how to correctly populate the data layer (Risk Factor = 8/10)

Once you do get time from developers, you need to make sure that they understand exactly what you need them to do. In many cases, even though they know what is on the website or mobile app, they are not familiar with data layers or digital analytics tools. Therefore, it is critical that you over-document what is needed and provide this documentation in the tools they are used to (e.g., GitHub, Jira). I have seen many cases in which organizations wait months to get developers’ time, only to find that the developers don’t have what they need, misinterpret the requirements, or make mistakes, and are then unavailable for another stretch of weeks or months!

- Verifying data quality (Risk Factor = 8/10)

As I have blogged about in the past, a digital analytics implementation with poor data quality can be worse than having no analytics implementation at all. Unfortunately, verifying data quality is difficult, manual, and time-consuming. The best time to verify data quality is while developers are completing their work, but I often see organizations check data quality too late, when fixes are more difficult to make. Poor data quality is a high risk to the overall analytics implementation: when stakeholders encounter data quality issues, they become hesitant to base business decisions on your analytics data.
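Verification can be partly automated when the tagging spec itself is machine-readable. The Python sketch below assumes you can capture the data layer events fired during a developer’s test session as a list of dictionaries; the required-attribute spec and the captured hits are hypothetical. It counts failing hits per event and describes each problem, so issues surface while developers are still working on the code rather than after launch.

```python
from collections import Counter

# Hypothetical spec: attributes that must be present and non-empty per event.
REQUIRED = {
    "lead_submitted": {"form_name", "lead_value"},
    "search_performed": {"search_term"},
}


def audit_hits(hits: list[dict]) -> tuple[Counter, list[str]]:
    """Check captured data layer hits against REQUIRED.

    Returns a Counter of failing hits per event (unknown events are counted
    under "<unknown>") and a human-readable description of each problem.
    """
    failures: Counter = Counter()
    issues = []
    for i, hit in enumerate(hits):
        event = hit.get("event")
        required = REQUIRED.get(event)
        if required is None:
            failures["<unknown>"] += 1
            issues.append(f"hit {i}: unexpected event {event!r}")
            continue
        # Treat absent keys, None, and empty strings as missing values.
        missing = sorted(k for k in required if hit.get(k) in (None, ""))
        if missing:
            failures[event] += 1
            issues.append(f"hit {i}: {event} missing {missing}")
    return failures, issues


# Hypothetical hits captured from a test session.
captured = [
    {"event": "lead_submitted", "form_name": "contact", "lead_value": 50.0},
    {"event": "lead_submitted", "form_name": ""},
    {"event": "search_performed", "search_term": "pricing"},
    {"event": "page_view"},
]
```

For the sample `captured` list, `audit_hits(captured)` flags hit 1 (an empty `form_name` and a missing `lead_value`) and hit 3 (an event that is not in the spec), while the other two hits pass.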

- Correctly creating dashboards, metrics, segments (Risk Factor = 6/10)

After you have implemented (whether initially or through incremental updates), you will likely need to create reports, dashboards, metrics, segments, etc., to act on your data. Luckily, these are fairly easy to create in modern digital analytics tools, but they take time nonetheless. If you implement but don’t make it easy for your users to find the information they need, or don’t create useful reporting, all of the preceding work will have been for nothing.

- Providing training to stakeholders on how to get to data and insights (Risk Factor = 8/10)

Since your analytics team can’t be in all places at all times, at some point you have to teach your stakeholders how to get their own data. This means you need to train them to use your digital analytics tool. Many organizations fail to train their users, which means their analytics teams are inundated with analysis requests, delaying the delivery of useful data and insights. In addition to training stakeholders on the analytics tool, it is arguably more important to train them on your bespoke analytics implementation. Your implementation will likely have events and attributes that make sense to your analytics team but are confusing to casual analytics users. For example, if you have a count of “Leads” in your implementation, your users may not understand exactly what qualifies a prospect as a “Lead.” Setting stakeholders loose on your analytics implementation without context can be like giving someone a rifle without teaching them how to shoot!

- Correctly interpreting data and providing useful analysis (Risk Factor = 8/10)

As you have likely heard, digital analytics is supposed to be more than just providing data. No one likes to have reports thrown in their face on a weekly basis. Therefore, the analytics team’s true goal should be to provide actionable insights from the data. I often see organizations provide only data instead of taking it to the next level. The reality is that many casual data users (your stakeholders) may struggle to turn data into insights, so this is an area where your team can add value. I rate this as a higher risk point to emphasize how important it is that, one way or another, your organization focuses on insights instead of data. Failing to do this will relegate your team to “cost center” status instead of “profit center” status.

- Creating an optimization/improvement culture (Risk Factor = 7/10)

The final risk point focuses on the general culture of analytics and optimization. I have found that many organizations’ digital analytics teams are reactive instead of proactive. They report what is happening but don’t really help the organization see what could happen if it tried new things. The most successful organizations have built a culture of experimentation, setting the expectation that the analytics team will always try new things and see which ideas lead to incremental improvements. If your analytics team can reduce the implementation’s overall risk by following the steps above, you should be able to build the goodwill needed to transition into an optimization culture, which is when digital analytics becomes much more fun!

As you can see, there are a handful of key points within the implementation process where you can significantly increase or decrease the odds of your success. While there is never a guarantee that an organization will be successful with an initiative like digital analytics, I have found that you can greatly increase your chances of success by proactively addressing the items on this list.

To that end, I have created a simple spreadsheet that you can use to rate your team on these key risk points and see where you stand. The spreadsheet lets you rate your analytics program/implementation on each of the preceding risk items and then calculates your risk score.

If you’d like to create your own analytics implementation risk assessment score, you can access the spreadsheet and make a copy of it here.
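The post does not spell out the spreadsheet’s formula, so the following Python sketch is only one plausible way to compute such a score, not a description of the spreadsheet itself. It uses the risk factor ratings from this post as weights (they happen to sum to 100) and assumes you rate your own program on each factor from 0 (poor) to 10 (excellent); that self-rating scale and the shortened factor labels are assumptions. It also ranks factors by weighted shortfall, in the spirit of focusing on the most impactful items first.

```python
# Weights are the risk factor ratings from this post (1-10; higher means
# mistakes in that area are more damaging). Labels are shortened paraphrases.
RISK_WEIGHTS = {
    "Executive support": 8,
    "Analytics team": 9,
    "Analytics tool": 4,
    "Business requirements": 5,
    "Solution design": 7,
    "Data layer & tagging specs": 7,
    "Tag management & admin settings": 9,
    "Tagging resources": 6,
    "Developers populating the data layer": 8,
    "Data quality verification": 8,
    "Dashboards, metrics, segments": 6,
    "Stakeholder training": 8,
    "Interpretation & analysis": 8,
    "Optimization culture": 7,
}


def readiness_score(ratings: dict[str, float]) -> float:
    """Weighted score from 0 to 100; every factor needs a 0-10 self-rating (assumed scale)."""
    total = sum(weight * ratings[factor] for factor, weight in RISK_WEIGHTS.items())
    return 100 * total / (10 * sum(RISK_WEIGHTS.values()))


def biggest_gaps(ratings: dict[str, float], n: int = 3) -> list[str]:
    """Return the n factors with the largest weight * (10 - rating) shortfall."""
    gaps = {factor: weight * (10 - ratings[factor]) for factor, weight in RISK_WEIGHTS.items()}
    # sorted() is stable, so ties keep the order in which factors are listed.
    return sorted(gaps, key=gaps.get, reverse=True)[:n]
```

For example, a program rated 5 on every factor scores 50, and `biggest_gaps` puts the two factors weighted 9 (the analytics team and tag management configuration) at the top of the list, followed by executive support.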

Apollo – Risk Mitigation Tool!

If you have been reading my recent posts, you know that I have been working on a new software product called Apollo. Apollo is a new type of software, called an analytics management system, that improves and automates much of the analytics implementation process. It is no coincidence that many of Apollo’s core features help minimize the risks I have seen associated with digital analytics implementations. Here are just a few examples:

- The business requirements library reduces the risk of having no business requirements, or unproven ones, for your analytics implementation

- The best practice solution designs help ensure that you are answering business requirements with approaches blessed by me and other industry experts

- Dynamic data layer and tagging specifications reduce the time and effort needed to turn solution designs into tags while also applying proven data layer best practices

- Automatic tag management configuration allows you to create or update tag management rules, data elements and environments in seconds, while guaranteeing that all elements are correctly related to the solution design, data layer and tagging specifications

- Administration setting synchronization provides an easy way to make sure that the data points needed in your analytics tool are configured with the click of a button

- Data quality verification is automated and directly connected with the same data layer and tagging specifications created by Apollo

- Automated conversion metrics and dashboards allow you to get data into the hands of your stakeholders quickly and easily

Over the past few years, we have used Apollo to help improve and expedite digital analytics implementations. At least half of the key risk points described above can be improved with Apollo. Here is an example of the risk analysis spreadsheet shared above with example Apollo improvements:

In this middle-of-the-road implementation, Apollo can increase the chances of implementation success by more than one-third! The lower an implementation’s current scores, the more Apollo can help; in the following case, it could increase the chances of success by almost sixty percent:

Summary

Hopefully, this post inspires you to think about the key risk points in your digital analytics implementation. If you are honest with yourself about where your analytics program and implementation stand today, you can take steps to mitigate risk and increase your chances of success. I hope the risk factors described above, all learned in the trenches of hundreds of analytics implementations, help you focus your time on the areas most important to your organization.