How to get intelligent image metadata with AEM as a Cloud Service | AEM Community Blog Seeding

How to get intelligent image metadata with AEM as a Cloud Service by Cognifide Tech Blog Posts

Abstract

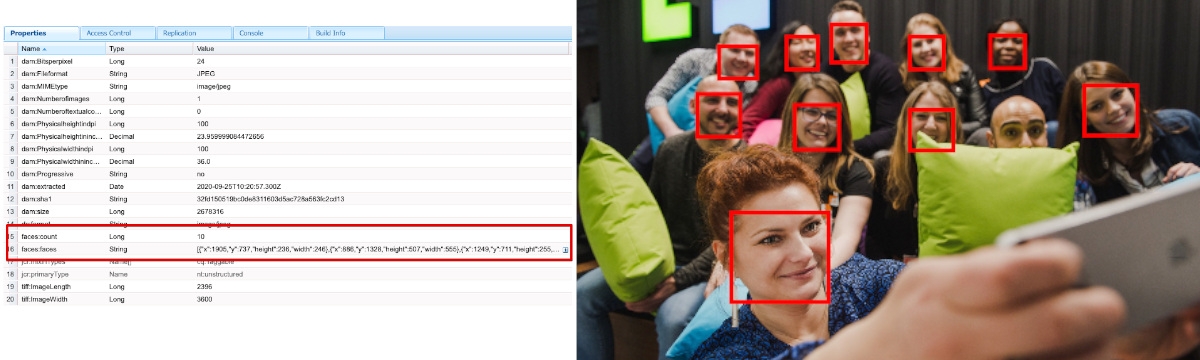

Generating image renditions is not the only action to perform on your AEM assets. Another essential aspect is asset metadata. Depending on how it is used, metadata can drive your brand taxonomy, help authors find assets, or underpin brand governance for your assets. Thanks to the Asset Compute Service, which is part of AEM as a Cloud Service, you can generate custom renditions by extracting important features from your assets with intelligent services.

In my previous post, on how to generate intelligent renditions with AEM as a Cloud Service, I showed how to build an Asset Compute worker that generates custom renditions driven by intelligent services. In this post, I'm going to show how to implement a worker that generates custom metadata. This is relatively easy, as the worker implementation is much the same; only the response differs. Instead of an asset binary, the worker must return an XMP data structure serialized into an XML file.

I've previously explained how the Asset Compute Service works and how data flows across its layers. For metadata workers, things are quite similar; the only difference is the worker's output, an XML file rather than an asset binary. Because that XML document contains asset metadata, it has to conform to the XMP specification.

Once again, I used imgix as my intelligent service, but this time with a function that detects faces in the image. For each face, it finds the coordinates of the bounds, mouth, left eye and right eye and adds them to its JSON output. Since we're only interested in the face bounds, the other data is ignored. The bounds are stored in AEM as two new metadata fields: `faces:count` and `faces:bounds`. However, rather than just showing them as yet another field in the AEM metadata editor, I created a custom component to visualise those regions. Here is the result for a sample asset.
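To make that data flow more concrete, here is a minimal TypeScript sketch of the core of such a metadata worker: it takes the face-detection JSON, keeps only the bounds, and serializes `faces:count` and `faces:bounds` into an XMP packet. It assumes the imgix response holds a `Faces` array whose entries carry a `bounds` object with `x`, `y`, `width` and `height`; the `faces` namespace URI and the `x,y,width,height` string encoding of each box are hypothetical placeholders, not the author's actual schema. Calling imgix and writing the XML to the rendition file are left out.

```typescript
// Assumed shape of one bounding box in the imgix face-detection JSON.
interface Bounds {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Assumed shape of one detected face; mouth, left_eye and right_eye
// may also be present but are ignored by this sketch.
interface ImgixFace {
  bounds: Bounds;
  [key: string]: unknown;
}

interface ImgixFacesResponse {
  Faces?: ImgixFace[];
}

// Hypothetical namespace URI for the custom "faces" metadata schema.
const FACES_NS = "http://example.com/ns/faces/1.0/";

// Keep only the bounding box of each detected face (empty if none found).
function extractFaceBounds(response: ImgixFacesResponse): Bounds[] {
  return (response.Faces ?? []).map((face) => face.bounds);
}

// Serialize the face data as a minimal XMP packet: faces:count as a simple
// property, faces:bounds as an ordered rdf:Seq with one item per face.
function toXmp(bounds: Bounds[]): string {
  const items = bounds
    .map((b) => `<rdf:li>${b.x},${b.y},${b.width},${b.height}</rdf:li>`)
    .join("");
  return [
    `<?xml version="1.0" encoding="UTF-8"?>`,
    `<x:xmpmeta xmlns:x="adobe:ns:meta/">`,
    `<rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">`,
    `<rdf:Description rdf:about="" xmlns:faces="${FACES_NS}">`,
    `<faces:count>${bounds.length}</faces:count>`,
    `<faces:bounds><rdf:Seq>${items}</rdf:Seq></faces:bounds>`,
    `</rdf:Description>`,
    `</rdf:RDF>`,
    `</x:xmpmeta>`,
  ].join("\n");
}

// Usage with a made-up response containing a single face.
const sample: ImgixFacesResponse = {
  Faces: [{ bounds: { x: 10, y: 20, width: 30, height: 40 }, mouth: { x: 25, y: 50 } }],
};
console.log(toXmp(extractFaceBounds(sample)));
```

Storing the boxes in an `rdf:Seq` rather than a single string keeps one entry per face in a stable order, which a visualisation component could iterate over when drawing the regions.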

Q&A

Please use this thread to ask related questions.