Resend files from Datawarehouse export after segment update

Hi all,

I created a scheduled export in the Datawarehouse using a segment as a filter.

It ran several times before I noticed that the segment I used as a filter needed an adjustment.

To avoid recreating my DWH export, I modified the segment directly and kept the same DWH export running.

My questions are:

- Will the updated segment be taken into account in the next runs of my export? I think so, but it would be great if you could confirm.

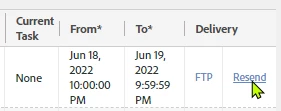

- Will it also work if I use the Resend files option for the previous runs in the DWH interface, to send the corrected files for past periods now?

Or is the file simply resent without any recalculation, or recalculated using the segment definition that was in effect at the time?

I tried it, and the segment modifications are not taken into account for the resent files.

Do I perhaps need to wait a certain amount of time for the segment update to synchronize with the export?

Thanks for your help

Robin