Solved

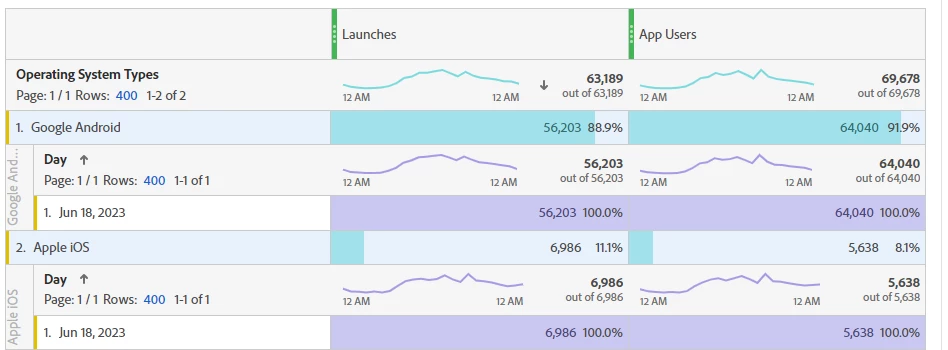

Launches are lower than unique visitors and visits

Hi,

For the last 12 months, launches have been lower than unique visitors. In which scenarios can this happen?

The volume of background data is not large either.

From what we have observed, this is not working properly on Android, but it works fine on iOS.

Please suggest a solution.