How is conversion measured in A/B testing?

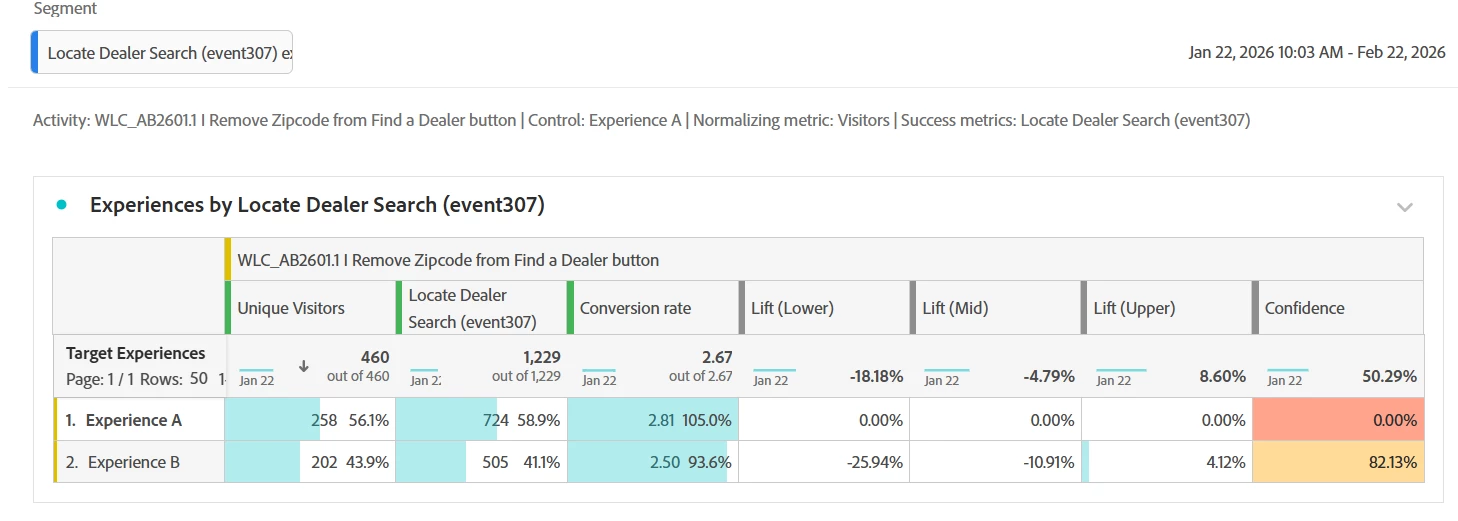

In our current A/B test (see the screenshot above), our team is perplexed as to why Experience B has the higher confidence while Experience A has the higher number of conversions. We are using Locate Dealer Search as our Segment, and our ultimate goal is the completion of a form on the dealer search page.

Can any experts explain how conversions are measured here, and why the two numbers disagree? Did we structure the test correctly?