Workflow trigger after multiple workflows end

Hi all,

I have to start a technical workflow, which exports data to CRM, after other smaller workflows end their activities.

The smaller workflows update data internally, so I have to export data to CRM only after all these technical workflows end their updates.

The best way I have found to solve this problem, while keeping it scalable for the future, is to use logs.

The log structure is like "Action_count, Flag_export".

When each small workflow finishes its activities, it increments Action_count by one and then checks whether Action_count equals the number of workflows inside a specific folder. If it does, the workflow sets Flag_export to 1.

On the other side, the main workflow waits until Flag_export becomes 1.

This way I only have to remember to put each new "small" workflow in that folder, and the main workflow will automatically wait until all x+1 smaller workflows have ended.
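The counting logic described above could be sketched roughly as follows. This is plain JavaScript with an in-memory object standing in for the log record. The function names, the object shape, and the reset step are assumptions made for illustration, not Campaign APIs, and where the record would actually be stored is left open.

```javascript
// Hypothetical stand-in for the "Action_count, Flag_export" log record.
// In a real setup this state would live in shared storage (assumption).
function createLog() {
  return { actionCount: 0, flagExport: 0 };
}

// Called by each small workflow when it finishes.
// expectedCount is the number of workflows in the watched folder.
function recordCompletion(log, expectedCount) {
  log.actionCount += 1;
  if (log.actionCount === expectedCount) {
    log.flagExport = 1;
  }
  return log;
}

// Checked by the main workflow on each polling pass.
function canExport(log) {
  return log.flagExport === 1;
}

// Assumed extra step: clear the record after the export so the next
// cycle does not start from stale values.
function resetLog(log) {
  log.actionCount = 0;
  log.flagExport = 0;
  return log;
}
```

One point to keep in mind with this design: if two small workflows finish at the same moment, the read-increment-write on the counter has to be atomic in whatever storage is used, otherwise a completion can be lost and the flag never turns to 1.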

Is there a better way to manage this case?

Thanks in advance and best regards,

Luca

Combine all the small workflows into one big one; this is optimal across the constraints of reliability, complexity, and maintainability.

Maintaining counting semaphores with logs and JS runs counter to these goals and will remain a chronic challenge for your users.

Hi,

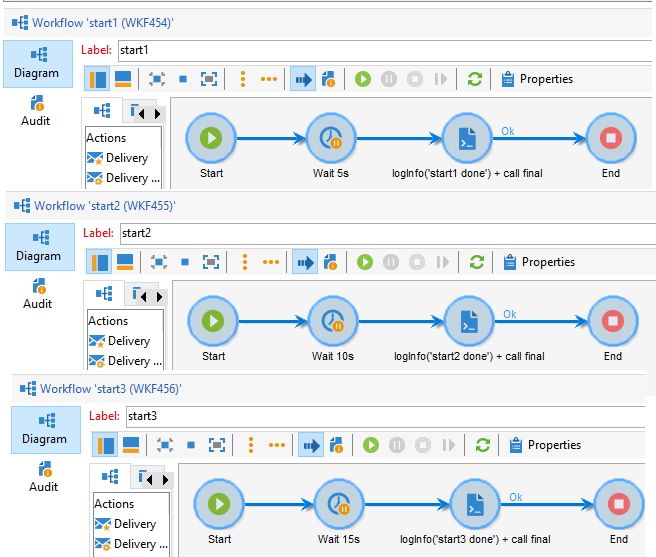

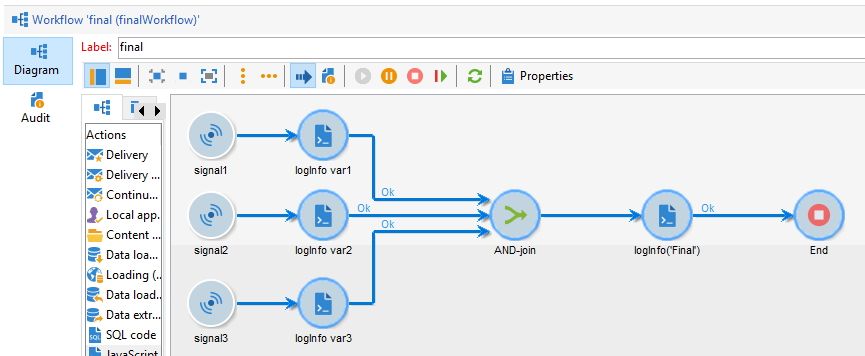

I think you can use xtk.workflow.PostEvent() in your first workflows, with each one calling a different "signal" in your final workflow:

Let's say you have 3 workflows that need to finish: "start1", "start2" and "start3":

And your final workflow looks like this:

The JS code for start1/2/3:

```js
// Each snippet below runs in its own workflow (start1, start2, start3).

// start1: pass a literal value
logInfo('start1 done');
var params = <variables var1="hello"/>;
xtk.workflow.PostEvent("finalWorkflow", "signal1", "", params, false);

// start2: pass the value of a variable
logInfo('start2 done');
var myVar = "var myVar";
var params = <variables var2={myVar}/>;
xtk.workflow.PostEvent("finalWorkflow", "signal2", "", params, false);

// start3: pass an object property
logInfo('start3 done');
var myObject = {key: "value"};
var params = <variables var3={myObject.key}/>;
xtk.workflow.PostEvent("finalWorkflow", "signal3", "", params, false);
```
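To illustrate the idea behind waiting for all three signals before continuing, here is a minimal sketch in plain JavaScript. It is not Campaign code: `createSignalTracker` and `receiveSignal` are hypothetical names, and in the actual final workflow this waiting would be handled by the workflow diagram rather than by a script like this.

```javascript
// Hypothetical tracker: records which signals have arrived and reports
// when every expected one has been seen at least once.
function createSignalTracker(expectedSignals) {
  return { expected: new Set(expectedSignals), received: new Map() };
}

// Record one incoming signal together with the variables it carried.
// Returns true once all expected signals have been received.
function receiveSignal(tracker, signalName, variables) {
  if (!tracker.expected.has(signalName)) {
    throw new Error("Unexpected signal: " + signalName);
  }
  // A repeated signal overwrites its earlier entry, so it is not counted twice.
  tracker.received.set(signalName, variables);
  return tracker.received.size === tracker.expected.size;
}
```

Tracking signals by name rather than by a bare counter means a workflow that posts its event twice cannot make the final step start early.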

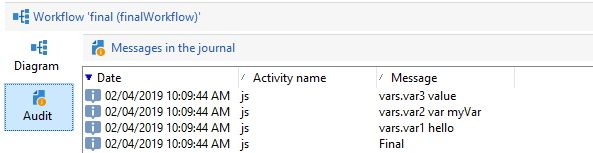

You'll end up with:

Hi Florian,

Thank you very much for your advice and your time.

I really like your solution, but this way, every time I want to develop a new "small" wf, I have to add the signal and the JS activities to the "main" one.

Like the other solution I found (every "small" wf calls the next small one when it ends, and the last "small" wf calls the main wf), it does not scale well for future improvements, keeping in mind that future developments will be made by business accounts, who are not very confident with platform customization.

Finally, in my humble opinion, the best solution (only in terms of simplicity and scalability) remains my first one.

What do you think about it?

Many thanks,

Luca

Hi Wodnicki,

Thank you for your reply.

I would avoid combining all the small wfs (not so small, but smaller than the main one) into one big workflow, because if they fail I have to restart each of them manually and keep monitoring them until they finish.

For this case, this solution seems to be too tricky to maintain.

Thank you very much,

Luca
